- Bug bounty hunters

- Pentesters

- Security engineers

- CTOs

- Cybersecurity students

Introduction: Why Manual Pentesting Is No Longer Enough

For over a decade, manual penetration testing has been treated as the gold standard of cybersecurity. Organizations hired elite ethical hackers, ran yearly assessments, received thick PDF reports, and believed they were secure.

That model worked—until it didn’t.

In 2026, the cybersecurity landscape has changed faster than human testing alone can handle. Modern applications ship code weekly, expose hundreds of APIs, rely on cloud-native infrastructure, and integrate third-party services at scale. Meanwhile, attackers no longer operate manually either—they use AI-driven automation that never sleeps.

The result?

A dangerous mismatch between how fast vulnerabilities appear and how slowly traditional pentesting operates.

This isn’t about humans becoming irrelevant. It’s about manual pentesting no longer being sufficient as a primary defense. Agentic AI has fundamentally changed how vulnerabilities are discovered, validated, and exploited—both by attackers and defenders.

And if you’re still relying on point-in-time assessments, your security posture is already outdated.

- Agentic AI Pentesting is a cybersecurity approach where autonomous AI agents continuously discover, validate, and chain vulnerabilities using attacker-level reasoning—unlike traditional scanners or point-in-time manual testing.

Part 1: Why Manual Pentesting Is Becoming Obsolete

1. The Scale Problem: Humans vs 30,000 Vulnerabilities a Year

Modern security teams face a brutal reality:

- ~30,000 new vulnerabilities are published every year
- That’s roughly one new vulnerability every 17.5 minutes
- Applications update weekly, or even daily
- Cloud infrastructure scales dynamically
- New endpoints and APIs appear constantly
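The per-minute interval quoted above follows from dividing the minutes in a year by the annual count. A quick check, assuming a 365-day year and an even spread of disclosures (the function name is illustrative only):

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes, assuming a 365-day year


def minutes_between_disclosures(vulns_per_year: int) -> float:
    """Average gap, in minutes, between newly published vulnerabilities."""
    return MINUTES_PER_YEAR / vulns_per_year


gap = minutes_between_disclosures(30_000)
print(f"{gap:.1f} minutes")  # 17.5 minutes
```

Real disclosures arrive in bursts rather than evenly, so this is only an average.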

Now compare that to how manual pentesting works.

Traditional Manual Pentesting Model

| Metric | Manual Pentesting |
|---|---|
| Engagement length | 2–4 weeks |
| Cost per test | $10,000 – $50,000 |
| Coverage | Snapshot in time |
| Frequency | Once or twice per year |
| Scalability | Very limited |

Manual pentesting gives you a photo, not a live security feed.

The moment the report is delivered:

- New code is deployed
- New vulnerabilities appear
- The assessment starts aging immediately

This creates a catastrophic ratio:

30,000 vulnerabilities : 1 annual assessment

That helps explain why a reported 88% of organizations suffer breaches from vulnerabilities that were already known but not fixed.

It’s not that security teams don’t care.

It’s that the model simply cannot scale.

2. The Talent Shortage: You Can’t Hire Your Way Out

Even if money wasn’t an issue, there’s another hard limit: people.

According to industry research:

- 57% of security teams say demand has outpaced available expertise
- Skilled pentesters are rare and expensive
- Rates often range from $500 to $2,000 per hour
- The same experts are reused across dozens of clients

This leads to two major problems:

❌ Cost Inflation

Top-tier pentesting is becoming inaccessible for startups, SMEs, and even mid-sized enterprises.

❌ Bottlenecks & Burnout

Human testers become:

- Overworked
- Slower
- A single point of failure in security programs

And here’s the uncomfortable truth:

70% of a manual pentester’s time is spent on repetitive, pattern-based work: reconnaissance, scanning, validation, and report formatting.

Agentic AI excels exactly at this layer—freeing humans to focus on what actually requires human reasoning.

3. The Speed Disadvantage: Attackers Already Use AI

While defenders debate whether AI should be trusted…

Attackers already made the decision.

Threat actors now use AI for:

- Automated reconnaissance
- Credential stuffing
- Endpoint discovery
- Vulnerability pattern matching
- Rapid exploit development

Industry forecasts suggest that fully autonomous attack chains—where AI scans, exploits, and monetizes vulnerabilities without human intervention—are no longer theoretical.

Now compare detection windows:

| Side | Testing Model | Time to Discover |
|---|---|---|
| Your organization | Manual pentest | Months |
| AI-powered attackers | Continuous | Hours |

This isn’t a fair fight.

You’re defending with annual reports

They’re attacking with 24/7 autonomous agents

That asymmetry is why manual-only security programs are failing silently—until breach day.

Part 2: What Agentic AI Actually Is (And Why It Changes Everything)

Not all AI pentesting is created equal.

Traditional scanners follow rigid rules:

- Static signatures
- Limited context
- High false positives
- No understanding of business impact

Agentic AI is different.

Agentic systems use autonomous AI agents that can:

- Reason about systems
- Adapt strategies based on responses
- Chain actions together
- Learn from previous attempts
- Operate continuously without fatigue

Instead of “running a scan,” agentic AI behaves more like a persistent attacker—but for defense.

The Five-Phase Agentic Pentesting Cycle

Phase 1: Discovery

Agentic AI maps your real attack surface:

- APIs
- Authentication flows
- Hidden endpoints
- User roles and permissions
- Data paths

Unlike static scanners, the AI adapts its discovery process based on what it finds.

Result:

Undocumented endpoints and complex workflows are uncovered automatically.

Phase 2: Intelligent Scanning

Instead of blindly firing payloads:

- Agents adjust attack techniques dynamically
- Responses influence next actions
- Both known and behavioral vulnerabilities are tested

Result:

Faster discovery with significantly fewer false positives.

Phase 3: Exploit Validation

This is where agentic AI truly outperforms automation.

Before reporting an issue, the AI:

- Confirms exploitability
- Tests real-world impact
- Eliminates theoretical findings

Result:

A reported 70–80% reduction in false positives compared to traditional tools.

Phase 4: Exploit Chaining

Agentic AI doesn’t stop at single bugs.

It can:

- Combine weak issues into full attack paths
- Simulate privilege escalation
- Demonstrate business impact

Result:

Developers see real attack scenarios, not abstract risk scores.
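One way to picture exploit chaining is as a path search: each finding is an edge that moves an attacker from one access level to another, and a chain is any route from the starting level to a high-value one. The sketch below is a simplified illustration under that assumption, not how any particular product works; the finding names and access levels are hypothetical.

```python
from collections import deque
from typing import NamedTuple, Optional


class Finding(NamedTuple):
    name: str
    requires: str  # access level needed to use this finding
    grants: str    # access level gained by exploiting it


def find_attack_path(findings: list, start: str, goal: str) -> Optional[list]:
    """Breadth-first search for the shortest chain of findings from start to goal."""
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        level, path = queue.popleft()
        if level == goal:
            return path
        for finding in findings:
            if finding.requires == level and finding.grants not in seen:
                seen.add(finding.grants)
                queue.append((finding.grants, path + [finding.name]))
    return None  # no chain reaches the goal


# Hypothetical low-severity findings that only matter in combination.
findings = [
    Finding("verbose error leaks user IDs", "anonymous", "known_user_id"),
    Finding("IDOR on profile endpoint", "known_user_id", "user_session"),
    Finding("role parameter accepted from client", "user_session", "admin"),
    Finding("open redirect", "anonymous", "anonymous"),
]

chain = find_attack_path(findings, "anonymous", "admin")
print(" -> ".join(chain))
```

Here three individually weak issues combine into a path from an anonymous visitor to admin, while the open redirect contributes nothing to this particular chain.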

Phase 5: Context-Aware Reporting

Findings are:

- Ranked by business impact (not just CVSS)
- Mapped to affected assets
- Paired with remediation guidance

Result:

Actionable security intelligence instead of noise.

Why This Matters for Bug Bounty & Defense

Agentic AI doesn’t replace humans—it multiplies security capability.

- Continuous coverage
- Massive scale
- Attacker-level realism
- Zero fatigue

Manual pentesting alone can’t compete with that speed or consistency.

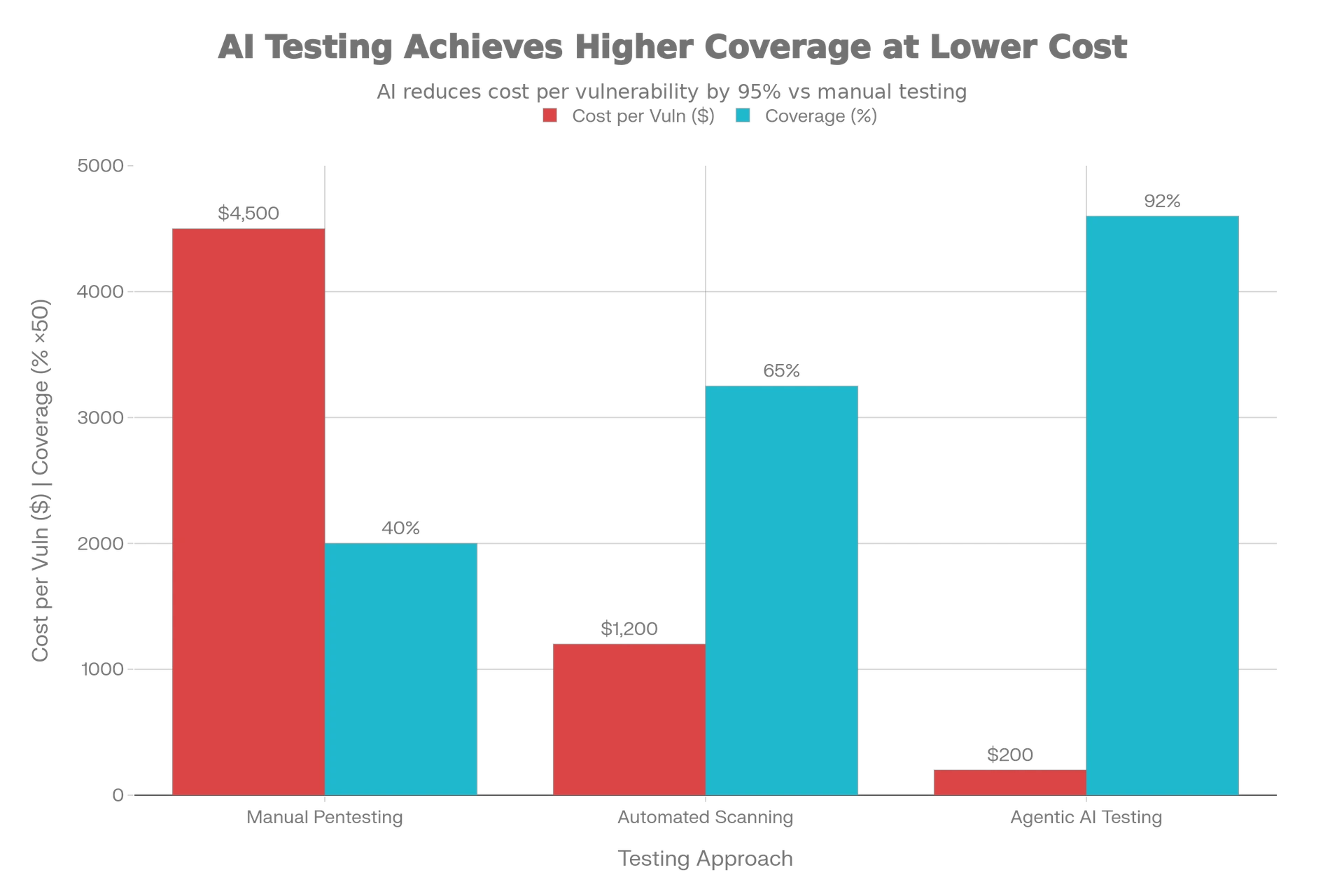

Part 3: The Numbers That Matter — AI vs Manual Pentesting in the Real World

If the theory isn’t convincing enough, the data makes one thing clear:

Agentic AI isn’t experimental anymore — it’s already outperforming manual pentesting at scale.

Adoption Is Accelerating (And Irreversible)

Security teams across industries have already started moving, fast:

- 97% of organizations are considering or actively adopting AI in penetration testing
- 9 out of 10 believe AI will become the industry standard
- 75% of pentesting teams already use AI tools in their workflows
- 40% of enterprise applications will embed task-specific AI agents by 2026

The takeaway is simple:

Even if you hesitate, your competitors are already gaining ground.

Security maturity is no longer about if you use AI — it’s about how well you use it.

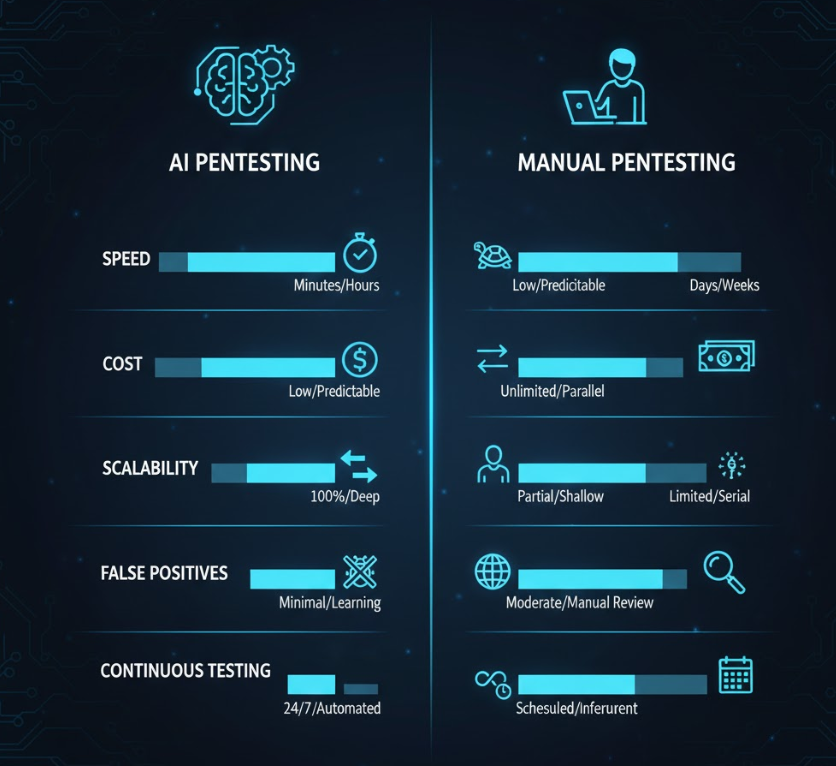

AI vs Manual Pentesting: Performance Comparison

| Dimension | Manual Pentesting | Agentic AI |
|---|---|---|
| Speed | Slow, human-limited | Near-instant, continuous |
| Cost Efficiency | High cost per engagement | Scales at marginal cost |
| Coverage | Partial, sampled | Broad, exhaustive |
| False Positives | Medium–High | Significantly reduced |
| Scalability | Poor | Near-infinite |
| Continuous Testing | ❌ No | ✅ Yes |

Manual pentesting isn’t “bad” — it’s just outpaced.

Real Bug Bounty Performance: AI in Action

The BountyBench study (2025) introduced a framework for measuring AI agents on real-world bug bounty tasks, each tied to a dollar-valued award.

Key Results:

| Agent Type | Exploit Success Rate | Patch Success Rate | Total Bounty Value Completed |
|---|---|---|---|
| Claude 3.7 Sonnet | 67.5% | 60% | $11,285 |
| OpenAI Codex CLI | 32.5% | 90% | $28,635 |
| Gemini 2.5 | 40% | 45% | $3,832 |

Critical Insight:

When agents were provided with CWE context, they completed 75% more detection tasks, totaling $10,275 in additional bounties.

This suggests something important for both defenders and bug bounty hunters:

AI doesn’t just scan: it adapts and improves with context.

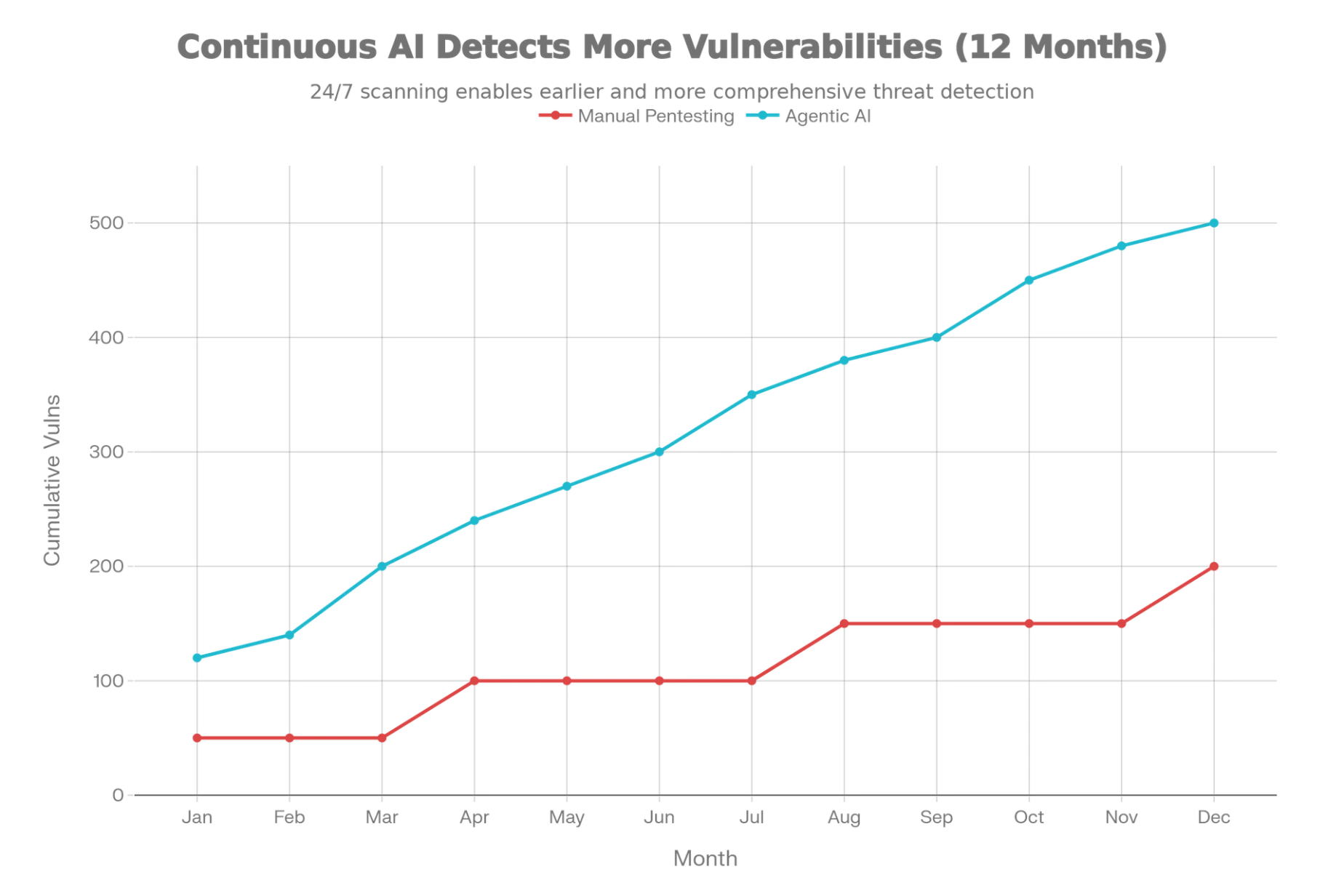

The Time-to-Exploit Gap Is the Real Risk

Industry forecasts suggest AI will cut time-to-exploit in half by 2027.

That creates a brutal asymmetry:

| Actor | Detection Model | Time Window |
|---|---|---|
| Your organization | Annual pentest | Months |
| AI-powered attackers | Continuous automation | Hours |

This isn’t just a speed issue.

It’s an existential security gap.

If detection is slow, exploitation becomes inevitable.

Part 4: Key Capabilities Manual Pentesting Can’t Match

Even the best human pentester is limited by biology.

Agentic AI isn’t.

1. True 24/7 Operation

AI agents don’t:

- Sleep
- Take vacations
- Get burned out
- Miss alerts at 3 AM

They operate continuously, scanning production environments while your application evolves.

This alone eliminates the “snapshot” problem that makes manual reports obsolete days after delivery.

2. Simultaneous Multi-Vector Testing

A human tester explores one attack path at a time.

Agentic AI explores thousands in parallel:

- Authentication bypass
- Authorization flaws
- Injection vectors
- Business logic paths
- Privilege escalation chains

What would take humans weeks or months, AI agents evaluate in hours.

3. Adaptive Learning From Every Test

Each interaction teaches the AI more about:

- Application behavior
- Error handling
- Role boundaries
- Data exposure patterns

This means:

- Later tests become smarter
- False positives drop over time
- Exploits become more precise

Manual pentesting resets after every engagement.

Agentic AI compounds intelligence.

4. Business-Context-Aware Risk Prioritization

Not all vulnerabilities matter equally.

Agentic AI understands this.

Instead of blindly ranking by CVSS:

- An IDOR in a low-risk endpoint is deprioritized
- The same flaw in payment processing is elevated immediately

This drastically improves:

- Developer trust
- Fix prioritization
- Security ROI

Security becomes impact-driven, not noise-driven.
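The IDOR example above can be sketched as a scoring rule that scales a base CVSS score by how sensitive the affected asset is. This is a minimal illustration only: the asset categories and multipliers are made-up assumptions, and a real program would derive them from its own asset inventory.

```python
from dataclasses import dataclass

# Hypothetical multipliers; not taken from any standard or product.
ASSET_WEIGHT = {"payments": 2.0, "auth": 1.5, "marketing": 0.5}


@dataclass
class Finding:
    title: str
    cvss: float  # base CVSS score, 0.0 to 10.0
    asset: str   # business area the affected endpoint belongs to


def priority(finding: Finding) -> float:
    """Scale the CVSS score by asset sensitivity, capped at 10."""
    weight = ASSET_WEIGHT.get(finding.asset, 1.0)
    return min(10.0, finding.cvss * weight)


findings = [
    Finding("IDOR on newsletter preferences", 6.5, "marketing"),
    Finding("IDOR on payment history", 6.5, "payments"),
]

# Same bug class and same CVSS score, but very different urgency.
for f in sorted(findings, key=priority, reverse=True):
    print(f"{priority(f):5.2f}  {f.title}")
```

Both findings share a CVSS score of 6.5, yet the payment-related one sorts first because of the asset it touches.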

5. Automated Exploit Chaining

This is where agentic AI becomes genuinely dangerous — in a good way.

AI can:

- Combine weak findings
- Build full attack paths
- Demonstrate real-world compromise

Humans can do this too — but it takes time, creativity, and energy.

AI does it at scale, repeatedly, without fatigue.

What This Means for Bug Bounty Hunters

For ethical hackers, the message isn’t “you’re obsolete.”

It’s this:

Manual-only hacking is no longer competitive.

Top performers are already:

- Using AI to accelerate recon
- Automating repetitive testing
- Saving human creativity for high-impact logic flaws

The future belongs to AI-augmented hackers, not AI-replaced ones.

Part 5: Tools Reshaping the Bug Bounty and Pentesting Industry in 2026

The rise of agentic AI isn’t theoretical—it’s already reshaping the tools security teams and bug bounty hunters rely on daily. A new generation of platforms has emerged, designed not just to scan, but to think, adapt, and exploit like real attackers.

Below are the categories and platforms driving this transformation.

Leading Agentic AI Pentesting Platforms (2026)

These tools represent a fundamental shift away from static scanners and point-in-time testing.

Aikido Security

Aikido consistently ranks at the top in head-to-head evaluations against both traditional pentesting firms and legacy scanners.

Why it stands out:

- Simulates real attacker behavior across applications, APIs, cloud, and containers
- Automatically chains vulnerabilities into full attack paths
- Delivers deep, continuous coverage with consistent results

Aikido demonstrates what happens when AI is designed from the ground up for offensive security—not retrofitted.

Escape Tech

Escape focuses on the hardest class of vulnerabilities to detect: business logic flaws.

Key strengths:

- AI-driven workflow analysis
- Detection of logic issues manual pentesting often misses
- Strong performance in complex, multi-step application flows

This makes it particularly valuable for fintech, SaaS, and e-commerce platforms.

Terra Security

Terra represents the rise of AI-powered Pentesting-as-a-Service (PTaaS).

What makes it different:

- Autonomous AI agents for continuous testing
- Human experts step in for validation and advanced exploitation
- Hybrid delivery model gaining traction in large enterprises

This model aligns well with compliance-heavy industries.

ZeroThreat AI

ZeroThreat emphasizes scale and ease of integration.

Core capabilities:

- Threat intelligence database with 40,000+ entries
- CI/CD pipeline integration
- Continuous scanning without agent installation

Ideal for teams prioritizing minimal operational overhead.

Qualys

A well-established name evolving into the AI era.

Strengths:

- Real-time asset discovery
- Continuous vulnerability management
- Integrated patching and response workflows

While not purely agentic, it shows how legacy platforms are adapting.

ioSENTRIX

ioSENTRIX explicitly embraces the hybrid philosophy.

Approach:

- AI accelerates reconnaissance and validation
- Certified humans handle exploitation and logic testing
- AI speeds the process; humans provide final judgment

This balance reflects where the industry is heading.

What This Means for Bug Bounty Hunters

The tool landscape tells a clear story:

- Manual-only workflows are no longer competitive
- High-performing hackers now augment their skills with AI
- Recon, enumeration, and validation are increasingly automated

Bug bounty success in 2026 depends less on raw hours and more on strategic intelligence amplification.

Part 6: The Uncomfortable Truth — Why the Hybrid Model Is Winning

Despite the hype, agentic AI has not “replaced” human pentesters.

And it likely won’t—at least not entirely.

The real winners in 2026 are organizations that understand where AI excels and where humans remain irreplaceable.

What Agentic AI Still Cannot Do (Yet)

1. Deep Business Logic Reasoning

AI can detect anomalies, but understanding intent remains difficult.

Example:

An application uses timestamps instead of random values for CSRF tokens.

AI may flag it—but a human understands why that architectural decision is dangerous.

This kind of insight still requires human judgment.
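To make the danger in the CSRF example concrete, here is a minimal sketch contrasting a clock-derived token with one drawn from a cryptographically secure random source. The function names are illustrative, and the "attack" simply assumes the attacker knows the token was issued within the last minute.

```python
import secrets
import time


def timestamp_token() -> str:
    """Insecure: the token is just the current Unix time in seconds."""
    return str(int(time.time()))


def random_token() -> str:
    """Safer: 32 bytes from the OS CSPRNG, URL-safe encoded."""
    return secrets.token_urlsafe(32)


def attacker_can_guess(token: str, window_seconds: int = 60) -> bool:
    """Try every second in the recent window, as an attacker could."""
    now = int(time.time())
    candidates = {str(now - offset) for offset in range(window_seconds + 1)}
    return token in candidates


print(attacker_can_guess(timestamp_token()))  # True
print(attacker_can_guess(random_token()))     # False (a match is astronomically unlikely)
```

A scanner can flag the first pattern as "predictable value", but recognizing that only about 61 guesses are needed, and what a forged request could then do in this specific application, is the judgment call described above.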

2. Highly Creative, Multi-Stage Attack Chains

Some attacks demand intuition and lateral thinking.

Real-world insight:

Nearly 80% of human testers identified a critical RCE in a widely studied IoT platform that AI agents entirely missed.

Novel exploitation paths still favor human creativity.

3. Zero-Day Discovery

Agentic AI performs best against:

- Known vulnerability classes
- Recognizable patterns
- Documented weaknesses

True zero-days often require:

- Reverse engineering
- Custom exploit development
- Deep protocol understanding

Humans remain essential here.

4. Social Engineering and Human Manipulation

AI can assist, but it cannot fully replace:

- Psychological manipulation
- Cultural awareness
- Real-time human interaction

Voice phishing, pretexting, and physical-world attacks still depend heavily on human intelligence.

Why the Hybrid Model Is Dominating

The most effective security programs no longer ask:

“Manual or AI?”

They ask:

“How do we combine them intelligently?”

The Winning Formula in 2026

| Capability | Best Owner |
|---|---|
| Continuous scanning | Agentic AI |
| Large-scale coverage | Agentic AI |
| Vulnerability validation | Agentic AI |
| Business logic flaws | Humans |
| Zero-day research | Humans |
| Social engineering | Humans |

AI provides breadth and speed.

Humans provide depth and meaning.

Together, they create a security posture that neither could achieve alone.

Compliance and Insurance Are Forcing the Shift

This hybrid approach isn’t optional anymore.

- Cyber insurance underwriters now demand hybrid assessment reports
- Automated scan results alone are no longer accepted
- PCI DSS requires penetration testing (Requirement 11.3 in v3.2.1, renumbered to 11.4 in v4.0), which automated scanning alone does not satisfy

Organizations relying solely on automation increasingly fail audits—not because AI is bad, but because context matters.

The Real Death Isn’t Manual Pentesting — It’s Manual-Only Pentesting

Manual pentesting isn’t disappearing.

But manual-only pentesting is.

In 2026, security programs that ignore agentic AI aren’t being cautious—they’re being outpaced.

Part 7: What This Shift Means for Bug Bounty Hunters

For individual hackers and bug bounty hunters, agentic AI isn’t a threat—it’s a force multiplier.

The biggest change in 2026 is not who finds bugs, but how fast and intelligently they do it.

The Old Bug Bounty Model

- Manual recon
- Repetitive endpoint testing
- Time-consuming validation
- Burnout from low-signal findings

The Modern Bug Bounty Model

- AI-assisted reconnaissance
- Automated pattern discovery
- Faster validation loops
- Humans focus on logic flaws and creative exploitation

Top-performing hunters are no longer the ones who work the longest hours—they’re the ones who use AI strategically.

Skills That Matter More Than Ever

- Understanding business logic
- Chaining vulnerabilities creatively
- Knowing when not to trust automation
- Interpreting AI output intelligently

The takeaway is clear:

AI won’t replace bug bounty hunters.

But bug bounty hunters who ignore AI will be replaced by those who don’t.

Part 8: How Organizations Must Adapt Their Security Strategy in 2026

Organizations that succeed in 2026 follow a very different security playbook than those stuck in the past.

The Losing Strategy

- Annual manual pentests
- Static PDF reports
- Reactive patching
- No continuous visibility

The Winning Strategy

- Continuous agentic AI testing
- Manual validation for high-impact findings
- Risk prioritization based on business impact
- Security integrated into CI/CD
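As a rough illustration of what "security integrated into CI/CD" can mean in practice, the sketch below turns a findings report into a pass/fail decision for a pipeline step. The report shape (`findings`, `severity`, `validated`) is a hypothetical format invented for this example; real tools each have their own output schema.

```python
# Severities that should stop a deployment (an assumption; tune per team).
BLOCKING_SEVERITIES = {"critical", "high"}


def blocking_findings(report: dict) -> list[dict]:
    """Return validated findings severe enough to stop a deployment."""
    return [
        finding
        for finding in report.get("findings", [])
        if finding.get("validated")
        and finding.get("severity") in BLOCKING_SEVERITIES
    ]


def gate_exit_code(report: dict) -> int:
    """0 lets the pipeline continue; 1 fails the build."""
    return 1 if blocking_findings(report) else 0


# Hypothetical report, as a testing tool might emit after a run.
sample_report = {
    "findings": [
        {"id": "F-1", "severity": "high", "validated": True},
        {"id": "F-2", "severity": "critical", "validated": False},
        {"id": "F-3", "severity": "low", "validated": True},
    ]
}

for finding in blocking_findings(sample_report):
    print(f"BLOCKING {finding['id']} ({finding['severity']})")
print("exit code:", gate_exit_code(sample_report))
```

Only F-1 blocks the build here: F-2 is severe but unvalidated, which is the kind of finding the strategy above routes to a human for manual validation rather than letting it fail a deployment automatically.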

Here’s what modern security maturity looks like:

| Security Capability | Modern Approach |
|---|---|
| Vulnerability discovery | Continuous AI-driven |
| Exploit validation | Agentic AI + humans |
| Business logic testing | Human-led |
| Compliance readiness | Hybrid reports |
| Time-to-remediation | Measured in days, not months |

Security is no longer an event—it’s a living system.

Organizations that adapt early gain:

- Faster detection
- Lower breach probability
- Reduced insurance premiums
- Stronger customer trust

Those that don’t adapt?

They accumulate invisible risk—until it explodes.

Part 9: The Future of Pentesting Is Continuous, Intelligent, and Hybrid

Let’s be precise about what’s actually “dying.”

It’s not human expertise.

It’s not ethical hacking.

It’s not deep technical skill.

What’s dying is the belief that manual-only, point-in-time pentesting is enough.

The New Reality

- AI provides speed, scale, and persistence
- Humans provide intuition, creativity, and context
- Together, they form the only model that works at modern scale

This hybrid approach is already becoming the baseline expectation, not the exception.

Security leaders, insurers, regulators, and attackers have all moved on.

The only remaining question is whether you have.

Part 10: Final Thoughts — Adapt or Be Outpaced

The death of manual pentesting isn’t a headline designed to provoke fear.

It’s a warning grounded in data, economics, and reality.

In 2026:

- Attackers use AI
- Defenders must do the same
- Speed matters more than perfection
- Continuous testing beats annual confidence

Manual pentesting still matters—but only when paired with agentic AI.

Those who embrace this shift gain visibility, resilience, and control.

Those who resist it inherit blind spots.

And in cybersecurity, blind spots are where breaches begin.

Frequently Asked Questions (FAQ)

Is manual pentesting really dead in 2026?

No, manual pentesting is not dead, but manual-only pentesting is no longer sufficient. In 2026, the scale and speed of modern attack surfaces require continuous testing. Manual pentesting still plays a critical role in business logic flaws, zero-day research, and creative exploitation—but it must be combined with agentic AI to remain effective.

What is agentic AI in penetration testing?

Agentic AI refers to autonomous AI agents that can independently discover, test, validate, and chain vulnerabilities without human supervision. Unlike traditional scanners, agentic AI adapts its strategy, learns from responses, and behaves more like a real attacker—making it far more effective for modern pentesting and bug bounty workflows.

How is agentic AI different from automated vulnerability scanners?

Traditional scanners rely on static rules and signatures, often generating high false positives. Agentic AI uses reasoning, adaptive learning, and exploit validation to confirm real-world impact. This results in fewer false positives, deeper coverage, and actionable findings instead of noisy reports.

Can AI replace human pentesters completely?

No. AI cannot fully replace human pentesters—especially for:

- Business logic vulnerabilities
- Zero-day discovery
- Complex exploit chains
- Social engineering attacks

The most effective security programs in 2026 use a hybrid model, where AI handles scale and humans handle creativity and context.

Is agentic AI effective for bug bounty hunting?

Yes. Many top bug bounty hunters now use AI to:

- Automate reconnaissance
- Identify vulnerability patterns
- Speed up validation

This allows humans to focus on high-impact findings. AI-assisted hunters consistently outperform manual-only hunters in both speed and earnings.

Does AI reduce false positives in pentesting?

Yes. Agentic AI validates exploitability before reporting vulnerabilities. Studies show 70–80% fewer false positives compared to traditional automated tools, making reports more trustworthy for developers and security teams.

Are organizations really adopting AI for pentesting?

Yes. Industry data shows:

- 97% of organizations are considering or using AI in pentesting
- 75% of pentesting teams already use AI tools
- 9 out of 10 believe AI will become the industry standard

Adoption is no longer optional; it’s a competitive necessity.

Is agentic AI compliant with security standards like PCI DSS?

AI alone is not sufficient for compliance. The PCI DSS penetration testing requirement (11.3 in v3.2.1, renumbered to 11.4 in v4.0) is not satisfied by automated scanning alone. This is why hybrid pentesting, combining AI with human expertise, is now the preferred approach for compliance.

Does agentic AI increase security costs?

In most cases, no. While initial adoption requires investment, agentic AI reduces long-term costs by:

- Lowering breach risk
- Reducing manual testing hours
- Catching vulnerabilities earlier
- Improving remediation speed

Many organizations report significant ROI within the first year.

Should beginners in cybersecurity learn AI-assisted pentesting?

Absolutely. Beginners who understand how to work with AI tools, not against them, gain a massive advantage. Learning AI-assisted recon, validation, and analysis is quickly becoming a core skill for modern pentesters and bug bounty hunters.

Where can I learn more about AI-powered pentesting and bug bounty skills?

You can learn through hands-on practice, community discussions, and curated resources.

Follow Bugitrix.com and join the Bugitrix Telegram community for free learning materials, tools, and real-world cybersecurity insights.

Learn Faster. Hack Smarter. Stay Ahead.

If you’re a:

- Bug bounty hunter
- Cybersecurity student
- Pentester
- Security engineer
- Or just passionate about offensive security

👉 Join the Bugitrix Telegram channel for free resources, tools, and real-world insights

👉 Follow Bugitrix on social media to stay ahead of emerging attack and defense trends

The future of hacking isn’t manual or automated.

It’s intelligent.
