Imagine this. You are sitting at your desk on a Tuesday afternoon. Your phone rings. It is your CEO. You recognize the voice instantly — the tone, the slight accent, the way they say your name. They need you to wire $240,000 to a vendor account. It is urgent. Confidential. Do not loop in anyone else just yet.

You do it.

Two days later, you find out your CEO never called. They were in a meeting the entire time. The voice you heard was a machine — and that machine just cost your company a quarter of a million dollars.

This is not fiction. This is deepfake phishing, and it is happening right now, in 2026, at companies of every size, in every industry, across every country.

What Exactly Is Deepfake Phishing?

Most people know phishing as those suspicious emails asking you to click a link and "verify your account." But phishing has evolved dramatically. Attackers are no longer just sending dodgy emails. They are cloning voices, generating fake video calls, and using AI to impersonate the exact person you trust most — your boss, your CFO, your IT manager, even your spouse.

Deepfake phishing combines two powerful technologies:

- AI voice cloning: creating a synthetic, near-perfect replica of a real person's voice from as little as a few seconds of audio

- Social engineering: manipulating human psychology to make you do something you would not normally do

When these two forces combine, the result is one of the most dangerous cyberattacks the world has ever seen.

Why This Is Exploding in 2026

A few years ago, creating a convincing fake voice required expensive software, skilled engineers, and hours of processing time. Today, free tools can clone a voice using as little as three seconds of audio. That audio can come from anywhere — a YouTube interview, a LinkedIn video, a podcast appearance, an earnings call. If your boss has ever spoken publicly online, their voice is already potentially in the hands of bad actors.

The numbers are staggering:

| Year | Reported Voice Clone Fraud Losses | Year-over-Year Growth |
|---|---|---|
| 2021 | $6.8 million | n/a |
| 2022 | $11.4 million | +67% |
| 2023 | $25.9 million | +127% |
| 2024 | $68 million | +162% |
| 2025 | $190+ million (estimated) | +179% (estimated) |

Source: FBI IC3 Reports + Cybersecurity Ventures estimates
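
If you want to check the growth column yourself, it can be recomputed from the loss figures. Here is a minimal sketch that assumes the dollar amounts in the table are the inputs and treats the 2025 figure as its stated lower bound of $190 million. The names `losses_musd` and `yoy_growth` are made up for this example.

```python
# Reported losses in millions of USD, copied from the table above.
# The 2025 value is the table's lower-bound estimate ("$190+ million").
losses_musd = {2021: 6.8, 2022: 11.4, 2023: 25.9, 2024: 68.0, 2025: 190.0}


def yoy_growth(losses: dict[int, float]) -> dict[int, float]:
    """Return year-over-year growth in percent for every year after the first."""
    years = sorted(losses)
    return {
        year: (losses[year] - losses[prev]) / losses[prev] * 100
        for prev, year in zip(years, years[1:])
    }


for year, pct in yoy_growth(losses_musd).items():
    print(f"{year}: {pct:+.1f}%")
```

Because the 2025 number is a floor, its growth rate is a floor too.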

The technology barrier is essentially gone. What once required expensive software and skilled engineers now costs little or nothing and takes minutes to set up.

Real-World Case Studies That Will Shock You

🏦 Case 1: The $35 Million Bank Heist — UAE, 2021

This is one of the most widely reported voice clone attacks to date. According to court documents made public in 2021, the attack itself took place in early 2020: a branch manager of a UAE bank received a phone call from someone who sounded exactly like the company director he worked with regularly. The "director" explained that the company was finalizing a major acquisition and needed $35 million transferred immediately.

The manager had spoken to this director many times. The voice was identical. The confidence was identical. Everything checked out — except the person on the other end was an AI.

The attack worked. Investigators later found that attackers had used deepfake audio software to clone the director's voice from previous calls and public recordings. A total of $35 million was moved across multiple accounts before the fraud was discovered.

🏢 Case 2: The "CFO" Who Was Never There — Hong Kong, 2024

A finance worker at a multinational firm received an email from what appeared to be the company's CFO, asking him to join a video conference call. During that call, he saw the CFO on screen along with several other "colleagues." They discussed a confidential transaction. He was asked to execute 15 transfers totaling HK$200 million (about US$25.6 million).

Every single person on that video call — except the victim — was an AI-generated deepfake.

This case sent shockwaves through the cybersecurity community because it demonstrated that attacks had evolved beyond voice. Now, full video deepfakes were being used in real-time meetings.

📞 Case 3: The Energy CEO Clone — Europe, 2019

This is the attack that first put voice cloning fraud on the map. The CEO of a UK-based energy firm received a phone call from what sounded exactly like his parent company's German CEO — the accent, the cadence, the slight speech patterns were all perfect.

The caller instructed him to transfer €220,000 to a Hungarian supplier immediately. The UK CEO complied. The money was moved. The German CEO had never made that call.

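No suspects were publicly identified. According to the firm's insurer, the money was moved on from the Hungarian account to other locations, and the attackers are believed to have used commercially available voice-generating software to imitate the German CEO.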

How Hackers Actually Do This: The Technical Breakdown

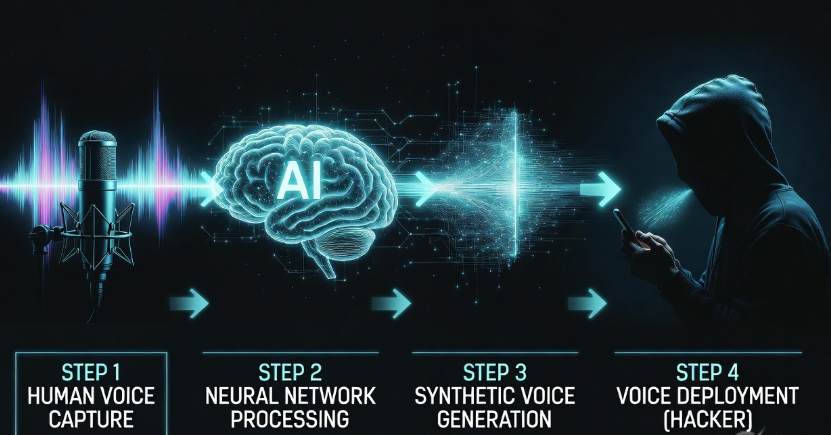

You do not need to be a technical expert to understand this. Here is the step-by-step process attackers follow:

Step 1: Target Identification

The attacker picks a target — usually a company with public information available. They identify a high-authority figure (CEO, CFO, IT Director) whose voice they want to clone.

Step 2: Voice Sample Collection

They collect audio samples from:

- YouTube interviews or keynotes

- Podcast appearances

- Earnings calls (often publicly posted)

- LinkedIn and Twitter video posts

- Company promotional videos

As little as 3–10 seconds of clean audio is enough for modern tools to work with. More audio generally produces a more convincing clone.

Step 3: Model Training

Using commercial services such as ElevenLabs or Resemble AI, research models such as VALL-E, or open-source alternatives, the attacker builds a voice model from the collected audio. The tool learns the unique vocal characteristics of the target.

Step 4: Script Generation

The attacker writes a script for the call — usually involving urgency, secrecy, and authority. Something like: "This is [CEO Name]. I need you to handle something urgently. Do not send an email about this. I will explain why later."

Step 5: The Call

The synthetic voice delivers the script in real time or as a pre-recorded message. Modern tools can even handle live voice conversion: a human attacker speaks, and the AI transforms their voice into the target's voice as they talk, allowing for interactive conversations.

Step 6: Follow-Up Social Engineering

Often, a fake email (spoofed or from a look-alike domain) follows the call to provide "written confirmation," increasing the victim's confidence that everything is legitimate.
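
On the defensive side, a look-alike domain in that follow-up email is something a mail filter can flag. Here is a minimal sketch that assumes a sender domain is suspicious when it is within a couple of character edits of your real domain without being identical to it. The function names, the `max_distance` threshold, and the `example.com` domains are all hypothetical.

```python
def edit_distance(a: str, b: str) -> int:
    """Levenshtein distance, computed one row at a time."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            curr.append(min(
                prev[j] + 1,               # delete a character from a
                curr[j - 1] + 1,           # insert a character from b
                prev[j - 1] + (ca != cb),  # substitute, free when equal
            ))
        prev = curr
    return prev[-1]


def is_lookalike(sender_domain: str, trusted_domain: str, max_distance: int = 2) -> bool:
    """True when the sender domain is close to, but not the same as, the trusted one."""
    sender = sender_domain.lower().strip(".")
    trusted = trusted_domain.lower().strip(".")
    if sender == trusted:
        return False
    return edit_distance(sender, trusted) <= max_distance


# Hypothetical domains: the first swaps "l" for "1", the second swaps "m" for "rn".
for candidate in ["examp1e.com", "exarnple.com", "example.com", "unrelated.org"]:
    print(candidate, is_lookalike(candidate, "example.com"))
```

A check like this only catches near-miss spellings. It does nothing against a correctly spelled domain that has been spoofed, which is why sender authentication and the callback habit described later still matter.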

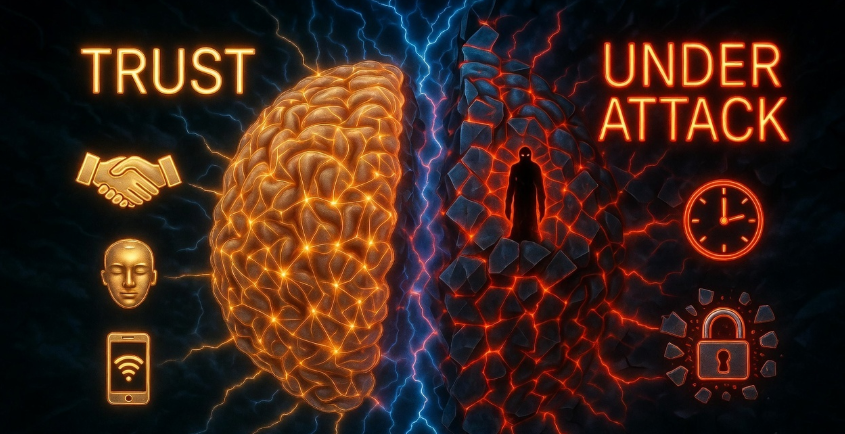

The Psychology Behind Why It Works

Here is the uncomfortable truth: this is not really a technology problem. It is a human psychology problem.

Attackers exploit deep-rooted psychological triggers:

| Psychological Trigger | How Attackers Use It |
|---|---|
| Authority | The voice of the CEO carries enormous weight. We are conditioned to comply with authority figures. |
| Urgency | "We need this done in the next 30 minutes" shuts down critical thinking. |
| Scarcity | "This opportunity closes today" creates panic-driven decisions. |
| Secrecy | "Do not mention this to anyone yet" isolates the victim from getting a second opinion. |
| Trust | Hearing a familiar voice triggers an emotional connection that bypasses rational analysis. |

Even highly intelligent, experienced professionals fall for this. The CEO of that UK energy company had years of experience managing sensitive transactions. He still fell for it because the attack hit psychological triggers that exist in every human brain.
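
Some of these triggers leave traces in the words themselves, so a written request or a call transcript can be screened for them. The sketch below is a rough heuristic, not a detector: the phrase lists in `TRIGGER_PATTERNS` are assumptions made up for this example, and the "trust" trigger is left out because it lives in the sound of the voice rather than in the text.

```python
import re

# Hypothetical phrase lists, one per trigger. Tune them for your own organization.
TRIGGER_PATTERNS = {
    "authority": [r"\b(ceo|cfo|director)\b"],
    "urgency": [r"\burgent(ly)?\b", r"\bimmediately\b", r"\bnext \d+ minutes\b"],
    "scarcity": [r"\bcloses today\b", r"\blast chance\b"],
    "secrecy": [r"\bconfidential\b", r"\bdo not (mention|tell|loop in)\b"],
}


def triggers_found(text: str) -> set[str]:
    """Return the names of the triggers whose phrases appear in the text."""
    lowered = text.lower()
    return {
        name
        for name, patterns in TRIGGER_PATTERNS.items()
        if any(re.search(pattern, lowered) for pattern in patterns)
    }


message = "We need this done in the next 30 minutes. Do not mention this to anyone yet."
print(sorted(triggers_found(message)))
```

Two or more triggers in one request is a reasonable cue to stop and verify, though a plain keyword match will miss anything phrased differently.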

Who Is Being Targeted?

Voice clone attacks are not random. Attackers are surgical. Here is who ends up in their crosshairs:

High-value internal targets:

- Finance team members with wire transfer authority

- HR personnel with access to payroll systems

- IT administrators with system access credentials

- Executive assistants who manage the CEO's communication

The impersonated personas:

- CEOs and founders

- CFOs and finance directors

- Legal counsel

- IT support (for credential phishing)

- Vendors and third-party partners

Industries under heaviest attack:

- Banking and financial services

- Healthcare (for patient data and insurance fraud)

- Manufacturing (supply chain fraud)

- Technology companies (credential theft)

- Government and defense contractors

The New Threat: Real-Time Deepfake Video

Voice cloning was just the beginning. In 2025 and 2026, attackers have started using real-time video deepfakes — live video manipulation that replaces a person's face and voice simultaneously during a video call.

Tools like Deep-Live-Cam (which went viral on GitHub in 2024) allow someone to use any reference photo to replace their face in real-time video. Combined with voice cloning, an attacker can now:

- Join a Microsoft Teams or Zoom call

- Appear as the company's CEO on screen

- Speak in the CEO's cloned voice

- Conduct a full "meeting" with the victim

This is why the Hong Kong case was so alarming. The victim was looking at what appeared to be a video of colleagues he recognized. Every frame was generated by AI.

How to Detect a Deepfake Voice or Video Call

Training your brain — and your team — to spot red flags is your first line of defense.

Red Flags in Voice Calls

- Unusual request pattern: The real CEO would normally send an email first, not call directly for a financial transaction

- Insistence on secrecy: Legitimate business transactions do not require bypassing normal approval chains

- Extreme urgency: Pressure to act within minutes is almost always a manipulation tactic

- Slightly unnatural pauses: AI voices sometimes have unnatural breathing patterns or gaps

- Cannot answer personal verification questions: Try asking something only the real person would know

- Unknown or spoofed number: Treat a call from an unfamiliar number as suspect, and remember that even a familiar Caller ID can be spoofed

Red Flags in Video Calls

- Blurry or glitchy face edges: Deepfake videos often have visible artifacts around the hairline and jaw

- Eyes that do not blink naturally: AI-generated faces sometimes have irregular blink patterns

- Lighting inconsistencies: The face may appear to have different lighting than the background

- Audio-video sync issues: Lips may not perfectly match the audio

- Inability to perform unexpected movements: Ask them to turn sideways or hold up fingers — deepfakes struggle with sudden unexpected actions
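
The last red flag works best when the request is genuinely unpredictable. Here is a small sketch that picks a random challenge for you. Turning sideways and holding up fingers come from the list above, while the other prompts and the name `pick_challenge` are assumptions added for illustration.

```python
import secrets

# Prompts to read aloud on a suspicious video call.
# The first two follow the red-flag list; the last two are illustrative additions.
CHALLENGES = [
    "Please turn your head fully to the side.",
    "Please hold up {n} fingers next to your face.",
    "Please pass your hand slowly in front of your face.",
    "Please stand up and step back from the camera.",
]


def pick_challenge() -> str:
    """Choose a challenge at random so it cannot be prepared in advance."""
    template = secrets.choice(CHALLENGES)
    # Templates without an {n} placeholder simply ignore the argument.
    return template.format(n=secrets.randbelow(5) + 1)


print(pick_challenge())
```

Passing a challenge does not prove the caller is real, since deepfake tools keep improving. Treat it as one signal alongside verification through a second channel.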

How Organizations Can Defend Against This

This is where the real work happens. Defense is not just about technology — it is about building a culture where verification is normal, not insulting.

1. Implement a Voice/Video Callback Protocol

Never act on financial instructions received via phone or video call alone. Always verify through a second, independent channel — call the person back on a number you already have stored, not one they provide during the suspicious call.
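
The key rule is that the callback number comes from your own records, never from the call itself. A minimal sketch of that rule follows, assuming a directory that was filled in before any suspicious call took place. `TRUSTED_DIRECTORY`, `CallbackDecision`, and the sample entries are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical directory, maintained separately from any incoming request.
TRUSTED_DIRECTORY = {"ceo@example.com": "+1-555-0100"}


@dataclass(frozen=True)
class CallbackDecision:
    proceed: bool
    number: Optional[str]
    reason: str


def choose_callback_number(
    claimed_identity: str, number_given_on_call: Optional[str] = None
) -> CallbackDecision:
    """Pick the number to call back, using only the stored directory."""
    stored = TRUSTED_DIRECTORY.get(claimed_identity)
    if stored is None:
        return CallbackDecision(False, None, "no stored number; escalate to security")
    # number_given_on_call is deliberately ignored: an attacker controls it.
    return CallbackDecision(True, stored, "call back on the stored number")


print(choose_callback_number("ceo@example.com", number_given_on_call="+1-555-0199"))
```

The same idea applies if the "directory" is just the contact list on your phone. What matters is that it existed before the request arrived.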

2. Create Code Words for High-Value Requests

Some companies now use secret verification phrases — a word or short phrase that both parties know, used to confirm identity during unexpected calls. This is particularly effective for executive teams.
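
A static phrase can leak over time. One possible variation, which goes beyond what is described above, is a phrase that changes daily and is derived from a shared secret. The sketch below assumes both parties hold the same secret and agree on the date. The word list, the function names, and the two-word length are made up for this example.

```python
import hashlib
import hmac
from datetime import date

# Hypothetical word list. With 8 words and 2 picks there are only 64 phrases,
# so a real deployment would need a far longer list and more words.
WORDS = ["amber", "birch", "cobalt", "delta", "ember", "fjord", "garnet", "harbor"]


def daily_code_phrase(shared_secret: bytes, day: date, n_words: int = 2) -> str:
    """Derive the day's phrase from an HMAC of the date under the shared secret."""
    digest = hmac.new(shared_secret, day.isoformat().encode(), hashlib.sha256).digest()
    return " ".join(WORDS[byte % len(WORDS)] for byte in digest[:n_words])


def phrase_matches(spoken: str, shared_secret: bytes, day: date) -> bool:
    """Compare the spoken phrase with the expected one, ignoring case and spacing."""
    expected = daily_code_phrase(shared_secret, day)
    normalised = " ".join(spoken.lower().split())
    return hmac.compare_digest(normalised.encode(), expected.encode())


secret = b"demo-secret-do-not-reuse"
today_phrase = daily_code_phrase(secret, date(2026, 1, 6))
print(today_phrase, phrase_matches(today_phrase.upper(), secret, date(2026, 1, 6)))
```

For most teams a simple agreed phrase, changed regularly, is easier to run. The point of the sketch is only that the phrase should never be guessable from public information.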

3. Require Multi-Party Approval for Wire Transfers

No single employee should have the authority to execute large wire transfers alone. A dual-approval process — where at least two people must authorize a transaction — eliminates the single point of failure that attackers exploit.

| Transfer Amount | Approval Required |
|---|---|
| Under $10,000 | Single manager approval |
| $10,000 to under $50,000 | Manager + Finance Director |
| $50,000 to $500,000 | CFO + CEO dual approval |
| Over $500,000 | Board-level sign-off required |
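
A policy like this is easy to encode so that a payment system, not a stressed employee, enforces it. The sketch below mirrors the tiers in the table and assumes that an amount of exactly $50,000 falls into the stricter CFO + CEO tier. The role names and function names are hypothetical.

```python
def required_approvers(amount_usd: float) -> frozenset:
    """Map a transfer amount to the roles that must all approve it."""
    if amount_usd < 10_000:
        return frozenset({"manager"})
    if amount_usd < 50_000:
        return frozenset({"manager", "finance_director"})
    if amount_usd <= 500_000:
        return frozenset({"cfo", "ceo"})
    return frozenset({"board"})


def is_authorized(amount_usd: float, approvals: set) -> bool:
    """True only when every required role is among the recorded approvals."""
    return required_approvers(amount_usd) <= set(approvals)


# The $240,000 request from the opening story, approved by one voice on the phone.
print(is_authorized(240_000, {"ceo"}))
print(is_authorized(240_000, {"ceo", "cfo"}))
```

Under these tiers, the wire from the opening scenario would have stalled until a second executive confirmed it through their own channel.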

4. Train Employees Regularly

Cybersecurity awareness training is not a one-time event. Run regular simulations — including fake voice clone attempts — so employees build muscle memory for skepticism. Make verification a habit, not an insult.

5. Use AI Detection Tools

Several tools now exist specifically to detect synthetic audio and video:

| Tool | What It Detects | Best For |
|---|---|---|
| Pindrop | Synthetic voice and phone fraud | Call centers, banks |
| Microsoft Azure Content Safety | Deepfake audio/video | Enterprise teams |
| Sensity AI | Deepfake video content | Media, HR, legal |
| Resemble Detect | AI-generated audio | Security teams |
| Intel FakeCatcher | Real-time video deepfakes | High-security orgs |

6. Lock Down Public Audio and Video Exposure

Audit how much of your leadership team's voice and video is publicly available. While you cannot remove everything, being aware of what exists helps you assess risk. Brief your executive team on this threat directly.

7. Update Your Incident Response Plan

When — not if — a deepfake attack hits your organization, you need a plan. Who do you call? What do you freeze? How do you contain the damage? This needs to be documented and tested before an attack happens.

What to Do If You Think You Have Been Targeted

If you receive a suspicious call or have already acted on instructions that now feel wrong, do the following immediately:

- Do not panic, but do act fast — time matters in financial fraud recovery

- Call your bank immediately — wire transfers can sometimes be recalled within hours

- Report to your IT/Security team — they need to know an attack occurred

- Do not delete anything — preserve the call records, emails, and any evidence

- File a report with law enforcement — in the US, report to the FBI's IC3 at ic3.gov

- Notify your legal team — there may be disclosure obligations depending on your industry

Recovery windows are narrow. The faster you act, the better your chances of recovering funds.

The Bigger Picture: AI as a Weapon

Deepfake phishing is just one face of a much larger transformation happening in cybersecurity. Artificial intelligence has fundamentally shifted the power balance between attackers and defenders. For decades, the advantage was with defenders — attacks were predictable, detectable, and required significant skill to execute.

That advantage is eroding. Today, AI gives attackers:

- Scale — launch thousands of personalized attacks simultaneously

- Sophistication — craft attacks that are nearly indistinguishable from legitimate interactions

- Speed — compress months of preparation into hours

- Accessibility — make advanced attack methods available to anyone, regardless of skill level

This is not cause for despair. It is cause for urgency. The organizations and individuals who take this seriously now — who invest in education, protocols, and culture — will be far better positioned than those who wait for it to happen to them.

🛡️ Free Resource: Deepfake Attack Response Playbook for Teams

To help your organization get prepared, we have put together a Deepfake Attack Response Playbook — a practical, step-by-step guide your security team and employees can use immediately.

The playbook covers:

- How to set up a verification protocol in under one hour

- Script templates for suspicious call handling

- Employee training checklist for voice clone awareness

- Incident response flowchart for when an attack is suspected

- Vendor contact list for deepfake detection tools

👉 Get the playbook and start building your defenses at bugitrix.com

Final Thoughts: Healthy Skepticism Is Not Rudeness

Here is the cultural shift that every organization needs to make: verifying identity is not insulting. It is professional.

If your CEO calls you and you say, "Just to confirm, can I reach you back on your office line in two minutes?" — a real CEO will respect that. They will understand. They might even be proud of you.

An attacker will push back. They will create urgency. They will make you feel like verification is wasting precious time.

That reaction — the pressure to skip verification — is your most reliable detector of fraud.

Trust your instincts. Slow down when someone tells you to hurry. Ask questions when someone tells you not to. Verify before you act. These simple habits are worth more than any AI detection tool on the market.

The machines are getting better at sounding human. The best defense is humans getting better at thinking critically.

Think someone you know needs to read this? Share it with your team, your family, or anyone who has ever answered a phone call from their boss. The more people who understand this threat, the harder it becomes for attackers to succeed.

Stay safe. Stay skeptical. Stay sharp.

— The Bugitrix Team