There is a war happening inside bug bounty programs right now. And the enemy is not a nation-state hacker, not a zero-day exploit, not even a rogue insider. The enemy is a copy-paste AI report written in three seconds by someone who has never opened Burp Suite in their life.

Bug bounty platforms like HackerOne and Bugcrowd have become the internet's most trusted pipeline for discovering real vulnerabilities. Companies pay millions in rewards every year. Ethical hackers spend weeks, sometimes months, chasing a single critical bug. The system works because it is built on trust, effort, and genuine skill.

But that trust is cracking.

AI tools — particularly large language models — are being weaponized to mass-generate fake, low-quality, and hallucinated vulnerability reports. Triage teams are drowning. Real hackers are getting buried. Program managers are burning out. And the entire ecosystem is being quietly poisoned.

This is not a future problem. It is happening right now.

What Is Actually Going On?

To understand the crisis, you need to understand how a bug bounty report works.

A hacker finds a vulnerability. They document it carefully — steps to reproduce, proof of concept, impact assessment, screenshots, sometimes a video walkthrough. They submit it to a program. A triage team reviews it, tries to reproduce it, classifies its severity, and either rewards or rejects it.

This process depends on one thing: the report being real.
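The dependency is easy to see once the triage flow is written down. Below is a minimal Python sketch of the review, reproduce, classify, and decide sequence described above. The `Report` fields, the `triage` function, and the `reproduce` callback are hypothetical illustrations, not HackerOne's or Bugcrowd's actual data model or API.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable, List, Tuple


class Outcome(Enum):
    REWARDED = "rewarded"
    REJECTED = "rejected"


@dataclass
class Report:
    # Hypothetical fields mirroring the parts of a report described above.
    title: str
    steps_to_reproduce: List[str] = field(default_factory=list)
    proof_of_concept: str = ""
    claimed_severity: str = "unknown"


def triage(report: Report, reproduce: Callable[[Report], bool]) -> Tuple[Outcome, str]:
    """Review, try to reproduce, classify, then reward or reject."""
    # Review: a report with no reproduction steps cannot even be attempted.
    if not report.steps_to_reproduce:
        return Outcome.REJECTED, "no reproduction steps"
    # Reproduce: this is the gate; polished wording cannot get past it.
    if not reproduce(report):
        return Outcome.REJECTED, "could not reproduce"
    # Classify: only a reproduced finding gets a severity assessment.
    return Outcome.REWARDED, f"reproduced; claimed severity {report.claimed_severity}"


if __name__ == "__main__":
    # A hallucinated IDOR report: the endpoint does not exist, so reproduction fails.
    fake = Report(
        title="IDOR in /api/v2/users",
        steps_to_reproduce=["Log in as user A", "GET /api/v2/users/1337"],
        claimed_severity="high",
    )
    print(triage(fake, reproduce=lambda r: False))
```

In practice, `reproduce` stands in for a human triager's time, which is why the cost of a fabricated report lands on the triage team rather than on the person who submitted it.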

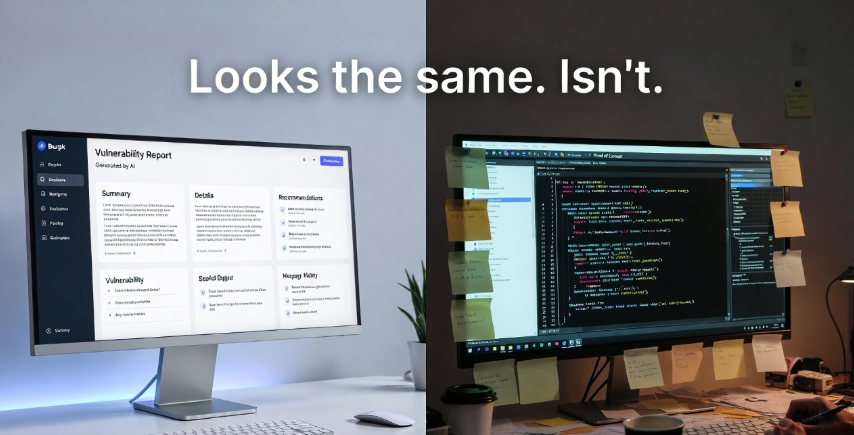

Now imagine someone opens ChatGPT, types "write me a bug bounty report for an IDOR vulnerability in a web application," copies the output word for word, changes the company name, and submits it to ten programs in an hour.

The AI writes a beautiful, technically convincing, completely fabricated report. Professional language. CVSS score included. Recommended remediation steps. Looks legitimate at first glance.

But when the triage team tries to reproduce it? Nothing. The endpoint does not exist. The parameter behaves completely differently. The "vulnerability" was hallucinated by a model that has no idea what the actual application looks like.

This is AI bug bounty abuse, and it is one of the fastest-growing problems in cybersecurity right now.

The Numbers Are Telling a Story

HackerOne's annual Hacker-Powered Security Report has tracked submission volumes for years. Bugcrowd's annual surveys reflect similar trends. While neither platform has released a specific "AI-generated fake report" statistic publicly, triage teams and program managers across the industry have started speaking openly about what they are seeing.

Here is what the community is reporting:

| Metric | Before AI Tools (2022) | After AI Tools (2024–2025) |
|---|---|---|
| Average triage time per report | 4–6 hours | 8–14 hours |
| Duplicate/invalid report rate | ~30–35% | ~50–65% (estimated) |
| Reports submitted by new accounts | Moderate | Significant spike |
| Reports with zero reproduction steps | Low | Sharply increasing |
| Burnout reports from triage staff | Occasional | Increasingly common |

These numbers are not official. They come from public forum discussions, Twitter/X threads, Discord conversations in hacker communities, and firsthand accounts from program managers. But the pattern is consistent enough that it cannot be dismissed.

When the signal-to-noise ratio drops this dramatically, real bugs get missed. Real hackers get delayed. And real security stays unfixed.

Who Is Actually Doing This?

Here is an uncomfortable truth: most people submitting AI-generated fake reports are not experienced hackers. They are beginners — sometimes teenagers — who watched a YouTube video about bug bounty, downloaded a free AI tool, and genuinely believed they could make money overnight.

No hands-on skills. No lab practice. No understanding of how web applications actually work. Just an AI prompt and a copy-paste.

This is not entirely their fault. The bug bounty industry has been aggressively marketed as "easy money for hackers." Influencers post screenshots of $10,000 payouts. Courses promise you will find bugs in thirty days. The hype is enormous, the expectation is unrealistic, and the result is a flood of unqualified submissions.

But there is also a smaller, more cynical group: experienced people who know exactly what they are doing. They use AI to scale their reporting — submitting dozens of marginally different reports for the same class of issue, hoping one slips through, or gaming reputation systems to build credibility before landing a legitimate reward.

Both groups are damaging the ecosystem. Their reasons and levels of intent differ, but the result is the same.

The Other Side: AI as a Legitimate Hacking Tool

Before we demonize AI entirely, let us be honest about something. AI is also genuinely changing security research — for the better.

Experienced researchers are using large language models to:

- Analyze large codebases for patterns that might indicate vulnerabilities

- Generate payloads and test variations faster

- Understand unfamiliar frameworks or legacy code

- Draft initial report structures that they then fill with real findings

- Summarize lengthy API documentation before testing

This is not abuse. This is a professional using a tool intelligently. The difference between a legitimate AI-assisted report and a fake AI-generated report is the difference between a carpenter using a power drill and someone drilling random holes in a wall, hoping to hit something.

The tool is neutral. The skill, the intent, and the verification behind it are what matter.

Here is a simple comparison to illustrate the difference:

| Factor | Legitimate AI-Assisted Report | Fake AI-Generated Report |
|---|---|---|
| Was the bug actually found? | Yes, by manual/automated testing | No, hallucinated |
| Reproduction steps | Real, verified, detailed | Vague or impossible |
| Proof of concept | Exists (screenshot, PoC code, video) | Missing or fabricated |
| Understanding shown | Deep, contextual | Surface-level, generic |
| Time invested | Hours to weeks | Minutes |
| Report language | May be AI-polished, but accurate | AI-generated, inaccurate |

The moment you submit a report without verifying the vulnerability yourself, you have crossed a line. It does not matter how polished your report sounds.

What Are Bug Bounty Platforms Doing About It?

HackerOne and Bugcrowd are aware of the problem. Both platforms have made statements about report quality and have implemented or discussed various countermeasures.

HackerOne has introduced reputation scoring systems that penalize low-quality submissions. Hackers with high rates of invalid reports can be removed from programs or have their earnings held. Its triage-as-a-service offering helps companies handle the volume, but that also means humans are still doing the work of filtering out junk.

Bugcrowd has leaned into its CrowdMatch technology and signal-based ranking to surface higher-quality researchers to programs. It has also updated its terms of service to address automated submission abuse.

Both platforms are exploring AI detection tools — ironic but logical — to identify AI-generated reports. The challenge is that good AI-generated text is hard to detect reliably. False positives risk penalizing legitimate researchers who write well or use AI for grammar assistance.

Some private programs have started requiring video proof-of-concept as a submission requirement. Others have added CAPTCHA-like challenges or mandatory researcher verification steps.

None of these solutions are perfect. All of them add friction for everyone, including the honest researchers.

The Real Damage Being Done

Let us talk about the actual victims here, because they are often invisible in this conversation.

Triage teams are burning out. Reviewing a fake report takes almost as long as reviewing a real one — sometimes longer, because you have to try to reproduce something that does not exist before you can close it. These are often underpaid, overworked security professionals who got into this field because they love security, not because they wanted to spend their days reading AI-generated paragraphs about IDOR vulnerabilities that do not exist.

Real hackers are being delayed and demoralized. When your legitimate critical finding sits in a queue for three weeks because the triage team is buried in junk, you lose faith in the system. Some of the best researchers are quietly pulling back from public programs, moving to invitation-only programs, or leaving bug bounty altogether.

Companies are paying for triage infrastructure that is increasingly consumed by noise. Every dollar spent reviewing a fake report is a dollar not spent fixing a real vulnerability.

Beginner hackers with integrity are getting caught in the crossfire. If you are new, learning, and submitting imperfect but honest reports, you are competing for triage attention against a flood of AI spam. Your learning journey is being disrupted by people gaming the same system you are trying to break into honestly.

Should AI-Generated Reports Be Banned?

This is the question the community is actually arguing about right now. And there is no clean answer.

The case for banning them: If you cannot reproduce a vulnerability without AI telling you what it is, you should not be submitting a report. Bug bounty programs are not AI testing grounds. They are professional security research pipelines. Standards matter. Quality matters. The damage being done to triage teams is real and measurable.

The case against an outright ban: How do you enforce it? AI detection is unreliable. You risk penalizing good researchers who use AI as a writing tool. The problem is not AI itself — it is the abuse. Banning the tool does not ban the intent.

The middle-ground view: What needs to happen is better verification requirements (proof-of-concept videos, lab reproduction evidence, researcher identity verification) combined with serious reputation penalties for serial invalid submitters. The bar for entry should go up; the tools researchers are allowed to use should not be restricted.
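One way to picture that middle ground is a tiered policy: new researchers are not judged on a handful of reports, but a sustained high rate of invalid submissions first triggers stricter verification and then a suspension. The sketch below is purely hypothetical; the thresholds, the tier names, and the `submission_requirement` function are assumptions for illustration, not any platform's real rules.

```python
from typing import List

# Hypothetical policy values; real platforms use their own, different rules.
MIN_SAMPLE = 10            # don't judge a researcher on fewer reports than this
VERIFY_THRESHOLD = 0.5     # invalid rate at which a video PoC becomes mandatory
SUSPEND_THRESHOLD = 0.85   # invalid rate at which submissions are paused


def submission_requirement(history: List[str]) -> str:
    """Map a researcher's recent report outcomes to a hypothetical requirement tier.

    `history` holds one outcome per recent report, e.g. "valid" or "invalid".
    """
    if len(history) < MIN_SAMPLE:
        # Too little data: beginners submitting honest reports are not penalized.
        return "standard"
    invalid_rate = history.count("invalid") / len(history)
    if invalid_rate >= SUSPEND_THRESHOLD:
        return "suspended"
    if invalid_rate >= VERIFY_THRESHOLD:
        return "video_poc_required"
    return "standard"


if __name__ == "__main__":
    print(submission_requirement(["invalid"] * 9 + ["valid"]))      # serial invalid submitter
    print(submission_requirement(["invalid"] * 6 + ["valid"] * 4))  # needs extra proof
    print(submission_requirement(["invalid"] * 3))                  # too few reports to judge
```

The minimum sample is the design choice that matters most here: it aims the penalty at serial invalid submitters rather than at newcomers whose first few reports miss.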

The bug bounty community tends to agree on one thing: the problem is not going away on its own. Platforms, companies, and researchers need to collectively raise the floor on what counts as a valid submission.

What Real Hackers Can Do Right Now

If you are a serious bug bounty hunter — whether you are just starting out or already pulling in consistent payouts — here is how you protect your reputation and contribute to fixing this problem:

1. Never submit without reproducing. This sounds obvious, but it needs to be said loudly. If you cannot show it working in your own environment, it is not ready to submit.

2. Build a lab. Set up deliberately vulnerable applications like DVWA or OWASP Juice Shop, or use training platforms such as HackTheBox and TryHackMe. Practice in controlled environments before testing live targets. AI can help you understand concepts here; that is legitimate.

3. Document everything. Strong reports include step-by-step reproduction, screenshots, impact analysis, and a clear explanation of the vulnerability class. This takes time. That time is what separates real researchers from noise.

4. Use AI as a tool, not a shortcut. AI can help you understand a CVE, explain how a vulnerability class works, or polish your report language. It cannot replace actually finding the bug.

5. Call it out. If you are part of hacker communities and you see people openly discussing AI-spam strategies, push back. The reputation of the entire community is at stake.

6. Focus on quality over quantity. One valid medium-severity report submitted per month is worth more — to your reputation and your wallet — than fifty invalid AI-generated submissions.
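Points 1 and 3 can be turned into a personal pre-submission check. The Python sketch below is a hypothetical self-review helper, not a platform feature: the `DraftReport` fields and the `pre_submission_problems` function are assumptions modeled on the report sections described above, and you would adapt them to the template your target program actually uses.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class DraftReport:
    # Hypothetical draft fields based on the sections a strong report contains.
    title: str
    vulnerability_class: str = ""
    steps_to_reproduce: List[str] = field(default_factory=list)
    evidence: List[str] = field(default_factory=list)  # screenshot, PoC script, or video paths
    impact: str = ""
    reproduced_myself: bool = False


def pre_submission_problems(report: DraftReport) -> List[str]:
    """Return the reasons a draft is not ready; an empty list means it passes."""
    problems = []
    # Point 1: never submit without reproducing it yourself.
    if not report.reproduced_myself:
        problems.append("not reproduced in your own environment")
    # Point 3: document everything.
    if not report.steps_to_reproduce:
        problems.append("missing step-by-step reproduction")
    if not report.evidence:
        problems.append("missing evidence (screenshots, PoC, or video)")
    if not report.impact.strip():
        problems.append("missing impact analysis")
    if not report.vulnerability_class.strip():
        problems.append("missing vulnerability class")
    return problems


if __name__ == "__main__":
    # A copy-pasted AI draft: polished title, nothing behind it.
    draft = DraftReport(title="Critical IDOR allows account takeover")
    for problem in pre_submission_problems(draft):
        print("-", problem)
```

A checklist like this cannot tell whether a finding is real; only point 1, actually reproducing it, can do that. It simply stops you from sending something incomplete.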

The Bigger Picture: What This Means for AI in Security

The fake bug bounty report crisis is a preview of a larger challenge. As AI tools become more capable and more accessible, every professional field that relies on verified human judgment is going to face some version of this problem.

Medical diagnosis. Legal research. Financial advising. Software security.

In each case, AI can accelerate the work of someone who already knows what they are doing. And in each case, AI in the wrong hands — without oversight, without verification, without accountability — produces outputs that look authoritative but are fundamentally untrustworthy.

Bug bounty programs are a microcosm. The lesson they are learning right now is going to matter for every other field trying to integrate AI responsibly.

The answer is not to reject AI. The answer is to demand that AI output be verified by human expertise before it is treated as real.

A Note to Beginners Reading This

If you are new to hacking and bug bounty, this article probably feels a little uncomfortable. Maybe you have used AI to help write a report. Maybe you have wondered if there is a faster way.

Here is the honest truth: there is no shortcut to real skill. The researchers making $50,000, $100,000, or more per year from bug bounty got there through years of genuine learning. They understand web applications deeply. They think creatively about how systems break. They have a mental model of attack surfaces that no AI prompt can replace.

AI can help you learn faster. It can explain concepts, answer questions, and help you understand what you are looking at. That is valuable. Use it that way.

But the actual skill? That comes from doing the work. From setting up labs, breaking things intentionally, reading write-ups from researchers who came before you, and slowly building intuition that no one can fake.

The bug bounty community wants more good researchers. We have enough noise.

🔥 Poll: What Do You Think?

Should AI-generated reports be banned from bug bounty programs?

- ✅ Yes — no AI reports, period. Human verification only.

- ⚡ No — ban the abuse, not the tool.

- 🤔 Need better verification requirements, not a ban.

- 💬 It is complicated — let me explain in the comments.

Drop your answer and your take. This debate is just getting started.

Want to Go From Noise to Signal? Start Here.

The difference between a hacker who gets paid and one who gets ignored is not talent. It is training, community, and the right resources at the right time.

📚 Read more, learn more, hack smarter: Head over to bugitrix.com for deep-dive blogs, technical breakdowns, and content built for hackers who take this seriously. No fluff. No AI-generated filler. Real security content from people who actually do this.

📲 Get daily cybersecurity tips, breaking news, and vulnerability alerts: Join thousands of hackers on the Bugitrix Telegram Channel → t.me/bugitrix. New content every day. Free forever.

🤝 Connect with real hackers: The noise is everywhere. The signal is in the community. Join the Bugitrix Hacker Community Forum to ask questions, share findings, get feedback on your reports, and build the network that actually moves careers.

🎓 Want someone to guide your journey personally? The Bugitrix mentorship program pairs you with experienced security researchers who have walked this road before. Stop guessing. Start growing. Apply for Mentorship →

📄 Ready to turn your skills into a career? A good resume gets you interviews. A great hacking resume gets you hired. Build your security resume with us →

The bug bounty ecosystem is worth fighting for. Real researchers, real bugs, real security. That is what this community is about. See you in the trenches.